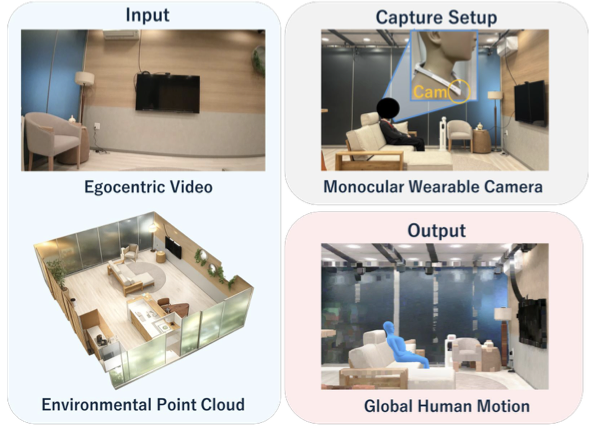

Map-Mono-Ego achieves globally consistent human pose estimation solely from a monocular camera by leveraging a pre-scanned 3D point cloud.

Abstract

Monocular egocentric human pose estimation is essential for ubiquitous activity monitoring. However, determining the wearer's absolute location within the environment remains a challenge: existing methods primarily estimate motion relative to an initial position and tend not to account for where the wearer is in the environment.

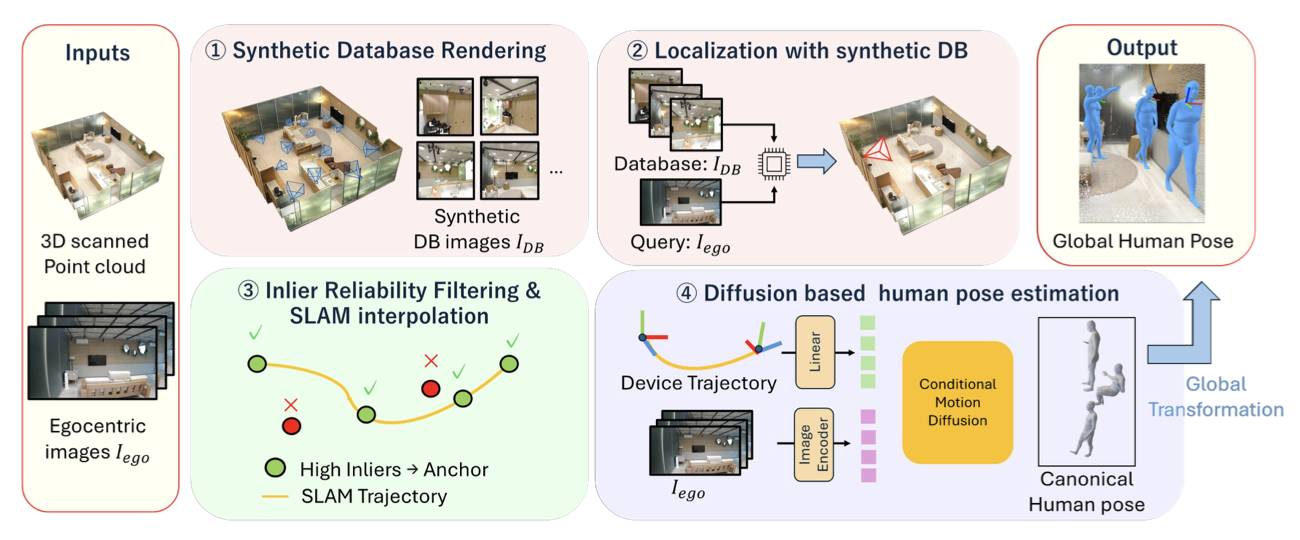

Furthermore, the inherent scale ambiguity of monocular vision leads to severe translational drift, which limits long-term tracking without specialized multi-sensor hardware. To address these issues, we propose Map-Mono-Ego, a novel framework that achieves globally consistent pose estimation solely from a monocular camera by leveraging a pre-scanned 3D point cloud.
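The scale ambiguity mentioned above can be illustrated with a small, self-contained sketch. It is not the Map-Mono-Ego pipeline; it only shows, under a simple pinhole model with identity rotation (an assumption made for brevity), that scaling the scene and the camera translation by the same factor leaves every pixel unchanged, and that a single known metric distance (such as one taken from a metric point cloud) is enough to fix that factor. All names and numbers here (`project`, `distance`, the sample points) are hypothetical.

```python
import math

def project(point, camera_position, focal_length=500.0):
    """Pinhole projection of a 3D point for a camera at camera_position
    with identity rotation, looking along +z (illustrative assumption)."""
    x = point[0] - camera_position[0]
    y = point[1] - camera_position[1]
    z = point[2] - camera_position[2]
    return (focal_length * x / z, focal_length * y / z)

def distance(a, b):
    """Euclidean distance between two 3D points."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

# Hypothetical metric scene and camera position (metres).
metric_points = [(0.5, 0.2, 4.0), (-1.0, 0.3, 6.0), (0.0, -0.4, 3.0)]
metric_camera = (0.1, 0.0, 0.5)

# A monocular reconstruction is only defined up to an unknown scale s.
s = 2.5
recon_points = [tuple(s * c for c in p) for p in metric_points]
recon_camera = tuple(s * c for c in metric_camera)

# Both configurations produce the same image, so the camera alone
# cannot tell them apart.
pixels_metric = [project(p, metric_camera) for p in metric_points]
pixels_recon = [project(p, recon_camera) for p in recon_points]

# One known metric distance between two points fixes the scale.
scale_to_metric = (distance(metric_points[0], metric_points[1])
                   / distance(recon_points[0], recon_points[1]))
corrected_camera = tuple(scale_to_metric * c for c in recon_camera)
```

Here `pixels_metric` and `pixels_recon` agree up to floating-point error, while `recon_camera` is off by the factor `s`. Rescaling by `scale_to_metric` brings the camera translation back to metric units.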

We also introduce the AIST-Living dataset, which pairs egocentric video with ground-truth motion in a scanned environment. Experiments show that our approach significantly outperforms the state-of-the-art baseline, demonstrating its utility for practical monitoring tasks without specialized hardware.

Method Overview of Map-Mono-Ego